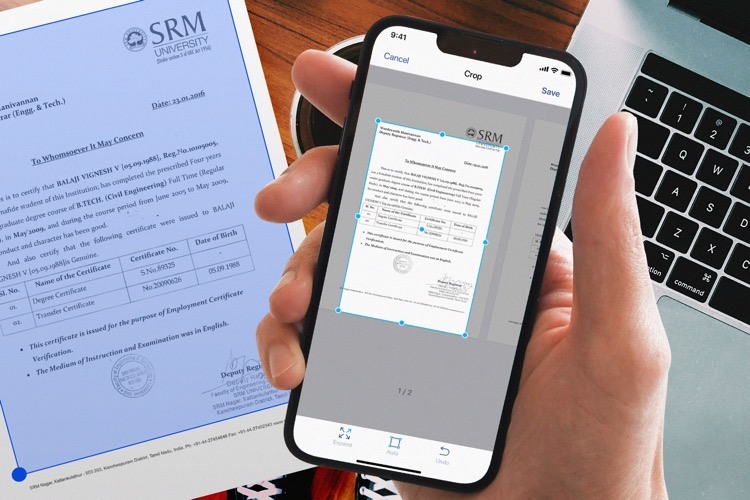

Flatbed scanners are becoming obsolete. They are being replaced by smartphones and dedicated apps that are turning into full-fledged substitutes for desktop devices. Automating processes with mobile scanning is more cost-effective for businesses of all sizes and is affordable even for micro-organizations. Of course, you can make copies simply by taking pictures with a smartphone camera, but then you end up with a photo that includes unnecessary background and additional artifacts.

The ideal solution in this case is a mobile scanning app powered by artificial intelligence. Why does that matter for scan quality? Artificial intelligence helps detect document borders and produce clean scans even in the most difficult conditions. Users rarely think about the factors that can affect the scanning result. Perspective distortions, lighting, color, and background texture: neural networks help us work around all of them. As a result, users can scan automatically in 2 seconds instead of selecting the document manually, which takes 5–6 seconds or more.

Neural networks in apps: current challenges and what to expect from this field

The biggest challenge in implementing neural networks in apps is computational resources. State-of-the-art algorithms require a lot of computational power, and some mobile devices can’t even load those models. There are two solutions to this problem:

- Run networks in the cloud and provide a result to the user through the Internet.

- Use special networks that are suitable for mobile devices and run them on the device itself.

The first solution is more expensive because it requires app publishers to rent servers. Moreover, it works only when an Internet connection is available. However, it allows us to provide users with the most modern algorithms, however resource-intensive they are, regardless of the user’s hardware.

As for the second solution, it requires us to take into account the oldest devices compatible with our app and develop special networks that will work with them.

Neither of these solutions is the best choice in every case. If you need the best accuracy possible, or if the algorithm is too resource-intensive for a phone, then the first option is the way to go. Opt for the second one if you need a solution that works well enough and doesn’t require an Internet connection. You can even combine the two by running one part of the network on the device and the other part in the cloud.
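The trade-off above can be sketched as a small routing rule. This is only an illustration under our own assumptions, not how any particular app decides: the names `DeviceState`, `Backend`, and `choose_backend`, and the memory-based check, are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Backend(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"


@dataclass
class DeviceState:
    """Hypothetical snapshot of the phone at scan time."""
    online: bool          # is an Internet connection available?
    free_memory_mb: int   # memory the app can currently use


def choose_backend(state: DeviceState,
                   model_memory_mb: int,
                   need_best_accuracy: bool) -> Optional[Backend]:
    """Pick where to run the network, or None if neither option is possible."""
    fits_on_device = state.free_memory_mb >= model_memory_mb

    # The cloud is only an option when online. Use it when the caller wants
    # the most accurate model or the on-device model cannot be loaded.
    if state.online and (need_best_accuracy or not fits_on_device):
        return Backend.CLOUD

    # Otherwise run locally if the model fits.
    if fits_on_device:
        return Backend.ON_DEVICE

    # Offline and the model does not fit: nothing can run.
    return None
```

For example, `choose_backend(DeviceState(online=False, free_memory_mb=512), 100, True)` falls back to the on-device model because the cloud is unreachable. A real app would likely weigh more signals, such as battery level, latency, or server cost.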

In the near future, our phones will become even more powerful, and deep learning researchers will develop even more efficient neural network architectures, allowing us to run some of the best algorithms in the field on mobile devices. We will also be able to use the best cloud GPUs and send the result to the user over 5G. All of this should make the user experience much smoother.

What is the market demand for neural network technology in mobile apps?

For small and medium-sized businesses, the need to increase efficiency and optimize costs remains high on the agenda, and it grows every year. The need to quickly scan documents, checks, and receipts is still there, but we don’t always have a flatbed scanner at hand. In addition, it is important to make high-quality scans without imperfections, which is an easy task for a mobile scanner based on a trained neural network.

During the pandemic, when people were away from their well-equipped workplaces, the issue of remote work with documents became quite acute. Therefore, a mobile app that allows an entrepreneur to organize remote work efficiently and send a high-quality document in a couple of taps is of tangible value to the user.

AI scanning mobile apps are used not only by entrepreneurs. The target audience of such applications includes users from various spheres:

- People working on the go (journalists, medical workers, merchandisers)

- Students (who need not only to scan a document but also to edit it quickly on the phone and then send it to a teacher via a messaging app)

- School teachers and university professors

What is unique about mobile apps that run on their own neural networks?

The most difficult task for an app is to determine what exactly the user wants to scan. It all starts with detecting the body and borders of a document in an image. Most scanning apps either can’t detect boundaries accurately and automatically or make a lot of mistakes in the process. For example, figuring out where the document ends and the table begins is not a trivial task. It only gets more complicated if the paper is on a white table or, as is usually the case, on a stack of papers. This is where AI comes to the rescue.

For an example of how artificial intelligence can be implemented in scanning apps, take our app, iScanner, which runs on its own neural network. AI made it possible to deal with complicated scanning cases, such as damaged documents, images taken in low light, perspective distortions, multiple documents in the frame, other objects overlapping the main document, and so on. The most interesting and, at the same time, most difficult part is that a single image often contains a combination of several or all of these factors. Once a neural network was introduced to iScanner, the accuracy of determining document borders increased from 62% to 97%. At the moment, more than 97.3% of the documents in the app’s dataset are detected with an error that is invisible to the naked eye.
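To make the perspective-distortion case more concrete, here is a minimal sketch of what can happen after the borders are found. It assumes the detector outputs the four corners of the document and uses the classical projective (homography) transform to map them onto an upright rectangle. This is a textbook technique chosen for illustration, not a description of iScanner's internals; the function names and corner coordinates are made up.

```python
def solve_linear(a, b):
    """Solve a*x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def homography(src, dst):
    """Return the 8 coefficients mapping four src corners onto four dst corners."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Two linear equations per corner pair, with the ninth coefficient fixed to 1.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    return solve_linear(a, b)


def warp_point(h, point):
    """Map one photo coordinate into the flattened scan."""
    x, y = point
    w = h[6] * x + h[7] * y + 1.0
    return ((h[0] * x + h[1] * y + h[2]) / w,
            (h[3] * x + h[4] * y + h[5]) / w)


# Hypothetical corners found in the photo (top-left, top-right,
# bottom-right, bottom-left) and the upright page they should map to.
detected = [(120, 80), (520, 110), (560, 700), (90, 650)]
page = [(0, 0), (400, 0), (400, 600), (0, 600)]
h = homography(detected, page)
```

With `h` computed, `warp_point(h, corner)` sends each detected corner to the matching corner of the page, and every pixel inside the quadrilateral can be resampled the same way. In practice, an image library would usually perform the warp. The hard part, as described above, is finding the four corners reliably in the first place.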

Today, the need to get a high-quality scanned document within seconds using a mobile phone is the reality of the new world. Therefore, app developers should think not only about improving the quality of the scan but also about the additional features of an AI-powered app since there is a clear trend towards turning scanning apps into multifunctional platforms.